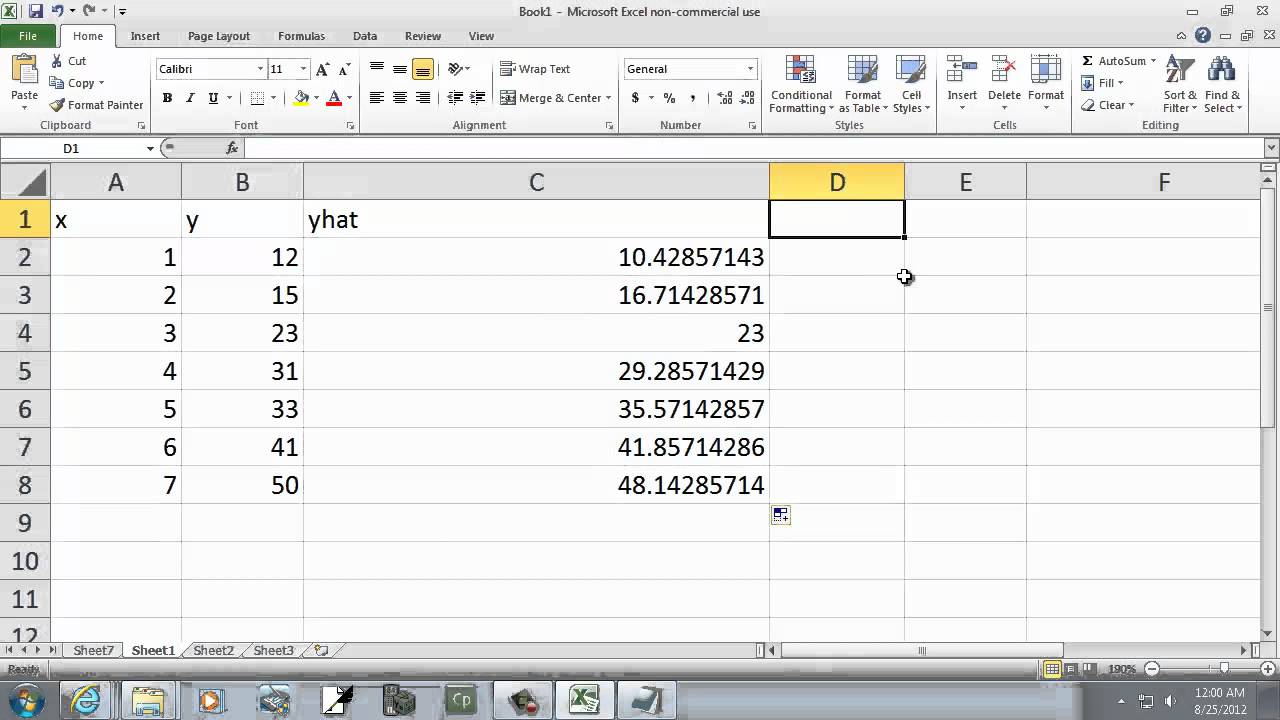

In statistics, the residual sum of squares (RSS), also known as the sum of squared residuals (SSR) or the sum of squared errors of prediction (SSE), is the sum of the squares of the residuals (the deviations of the predicted values from the actual empirical values of the data): $\mathrm{RSS} = \sum_i e_i^2$, where $e_i$ is the difference between the actual Y and the predicted Y for observation $i$. It measures the overall difference between your data and the values predicted by your estimation model. A residual is a measure of the distance from a data point to the regression line. Residuals are the observable errors from the estimated coefficients, so in a sense they are estimates of the errors.

The residual sum of squares helps you decide whether a statistical model is a good fit for your data. Intuitively, RSS tells us how much of the variation in Y the model leaves unexplained. Fit can be checked graphically, or by dividing the residual sum of squares into lack-of-fit and pure-error components and performing a significance test for lack of fit. RSS can also be obtained from the proportion of variance explained: with $R^2 = 1 - \mathrm{RSS}/\mathrm{TSS}$, where TSS is the total variation of Y about its mean, $\mathrm{RSS} = (1 - R^2)\,\mathrm{TSS}$.

Ordinary least squares (OLS) is the workhorse of statistics. It chooses the coefficients that minimize the RSS, and it gives a way of taking complicated outcomes and explaining behaviour (such as trends) using linearity. The simplest application of OLS is fitting a line.

In the Residual Sum of Squares Importance Method, if the change in RSS is negative (which is possible when you use the validation set), then the change is set to 0.
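As a rough illustration of the line-fitting case, here is a minimal sketch in plain Python that fits a line by OLS, computes the RSS, and relates it to the proportion of variance explained. The data points and the helper names (`fit_line`, `residual_sum_of_squares`, `total_sum_of_squares`) are made up for this example and do not come from any particular library.

```python
def fit_line(xs, ys):
    """Closed-form OLS fit of y = intercept + slope * x."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept, slope


def residual_sum_of_squares(xs, ys, intercept, slope):
    """Sum of squared differences between actual and predicted y."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


def total_sum_of_squares(ys):
    """Sum of squared deviations of y from its mean."""
    y_mean = sum(ys) / len(ys)
    return sum((y - y_mean) ** 2 for y in ys)


# Hypothetical data, chosen only to keep the arithmetic easy to follow.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 4.0, 5.0, 4.0, 5.0]

intercept, slope = fit_line(xs, ys)
rss = residual_sum_of_squares(xs, ys, intercept, slope)
tss = total_sum_of_squares(ys)
r_squared = 1 - rss / tss

print(f"intercept={intercept:.3f} slope={slope:.3f}")
print(f"RSS={rss:.3f} TSS={tss:.3f} R^2={r_squared:.3f}")
```

For this toy data the fitted line is roughly y = 2.2 + 0.6x, the RSS is about 2.4 out of a total sum of squares of 6, so the line accounts for about 60% of the variation in y. A smaller RSS on the same data would mean a closer fit.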
